Online Knowledge Distillation via Multi-branch Diversity Enhancement

Zheng Li (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University)*, Ying Huang (Hangzhou Normal University), Defang Chen (Zhejiang University), Tianren Luo (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University), Ning Cai (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University), Zhigeng Pan (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University)

Keywords: Deep Learning for Computer Vision

Abstract:

Knowledge distillation is an effective method for transferring knowledge from a cumbersome teacher model to a lightweight student model. Online knowledge distillation uses the ensembled predictions of multiple student models as soft targets to train each student model. However, the student models tend to become homogenized, which makes it difficult to improve model performance further. In this work, we propose a new distillation method that enhances the diversity among multiple student models. We introduce a Feature Fusion Module (FFM), which improves the performance of the attention mechanism in the network by integrating the rich semantic information contained in the last block of the multiple student models. Furthermore, we use a Classifier Diversification (CD) loss function to strengthen the differences between the student models and deliver a better ensemble result. Extensive experiments show that our method significantly enhances the diversity among student models and yields better distillation performance. We evaluate our method on three image classification datasets: CIFAR-10, CIFAR-100, and CINIC-10. The results show that our method achieves state-of-the-art performance on these datasets.
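To make the online distillation setup concrete, here is a minimal sketch of the generic step the abstract describes: several student branches produce logits, their ensemble is softened with a temperature to form a soft target, and each branch is trained toward that target with a KL-divergence loss. This is not the authors' implementation. It assumes plain averaging of logits as the ensembling rule and a temperature of 3.0, and the function names (`ensemble_soft_target`, `online_kd_losses`) are hypothetical. Neither the FFM nor the CD loss is modeled here.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max logit for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def ensemble_soft_target(branch_logits: List[List[float]], temperature: float) -> List[float]:
    """Hypothetical ensembling rule (assumption): average the branches' logits, then soften."""
    n = len(branch_logits)
    num_classes = len(branch_logits[0])
    mean_logits = [sum(b[c] for b in branch_logits) / n for c in range(num_classes)]
    return softmax(mean_logits, temperature)


def online_kd_losses(branch_logits: List[List[float]], temperature: float = 3.0) -> List[float]:
    """Per-branch distillation loss toward the ensemble soft target, scaled by T^2.

    In a real training loop the target would be detached (no gradient flows
    through it); here everything is plain floats, so that distinction is implicit.
    """
    target = ensemble_soft_target(branch_logits, temperature)
    return [
        temperature ** 2 * kl_divergence(target, softmax(b, temperature))
        for b in branch_logits
    ]


if __name__ == "__main__":
    # Three hypothetical student branches, three classes.
    logits = [[2.0, 0.5, -1.0], [1.5, 1.0, -0.5], [2.5, 0.0, -1.5]]
    for i, loss in enumerate(online_kd_losses(logits)):
        print(f"branch {i}: KD loss = {loss:.4f}")
```

The sketch also shows why homogenization matters. If every branch outputs the same logits, the averaged target equals each branch's own softened prediction, so every distillation loss is exactly zero and the branches have nothing left to learn from one another. Diversity-enhancing components such as the FFM and CD loss are meant to keep the branches from collapsing into that state.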

Similar Papers

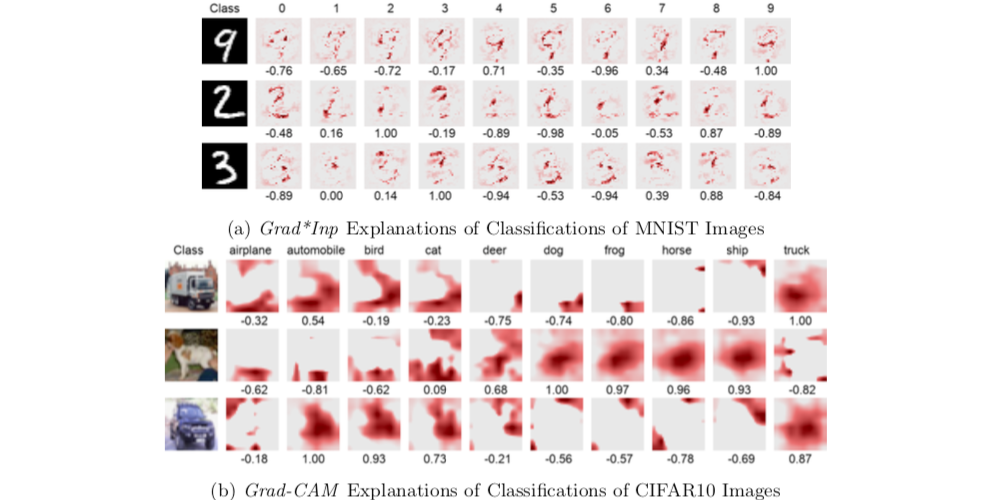

Introspective Learning by Distilling Knowledge from Online Self-explanation

Jindong Gu (University of Munich)*, Zhiliang Wu (Siemens AG and Ludwig Maximilian University of Munich), Volker Tresp (Siemens AG and Ludwig Maximilian University of Munich)

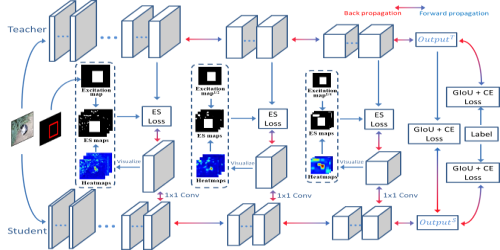

Fully Supervised and Guided Distillation for One-Stage Detectors

Deyu Wang (Canon Information Technology (Beijing) Co., LTD)*, Dongchao Wen (Canon Information Technology (Beijing) Co., LTD), Junjie Liu (Canon Information Technology (Beijing) Co., LTD), Wei Tao (Canon Information Technology (Beijing) Co., LTD), Tse-Wei Chen (Canon Inc.), Kinya Osa (Canon Inc.), Masami Kato (Canon Inc.)

Tracking-by-Trackers with a Distilled and Reinforced Model

Matteo Dunnhofer (University of Udine)*, Niki Martinel (University of Udine), Christian Micheloni (University of Udine, Italy)