Introspective Learning by Distilling Knowledge from Online Self-explanation

Jindong Gu (University of Munich)*, Zhiliang Wu (Siemens AG and Ludwig Maximilian University of Munich), Volker Tresp (Siemens AG and Ludwig Maximilian University of Munich)

Keywords: Deep Learning for Computer Vision

Abstract:

In recent years, many methods have been proposed to explain individual classification predictions of deep neural networks. However, how to leverage these explanations to improve the learning process has been less explored. The explanations extracted from a model can be used to guide the learning process of the model itself. Another source of information used to guide the training of a model is the knowledge provided by a powerful teacher model. The goal of this work is to leverage self-explanations to improve the learning process by borrowing ideas from knowledge distillation. We start by investigating which components of the knowledge transferred from the teacher network to the student network are effective. Our investigation reveals that both the responses in non-ground-truth classes and the class-similarity information in the teacher's outputs contribute to the success of knowledge distillation. Motivated by this finding, we propose an implementation of introspective learning that distills knowledge from online self-explanations. The models trained with the introspective learning procedure outperform those trained with the standard learning procedure, as well as those trained with different regularization methods. When compared to models learned from peer networks or teacher networks, our models also show competitive performance while requiring neither peers nor teachers.
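The abstract builds on standard knowledge distillation, in which a student is trained to match a teacher's temperature-softened output distribution. For reference, the following is a minimal sketch of the classic temperature-scaled distillation loss of Hinton et al. It is not the authors' introspective-learning method, which the abstract does not specify. The temperature, the `alpha` weighting, and the helper names (`kd_loss`, `non_ground_truth_distribution`) are illustrative assumptions. The last helper shows one hypothetical way to isolate the teacher's responses on the non-ground-truth classes, the signal the abstract identifies as important.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() numerically stable."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def kd_loss(student_logits: Sequence[float], teacher_logits: Sequence[float],
            label: int, temperature: float = 4.0, alpha: float = 0.9) -> float:
    """Classic distillation loss for a single example (illustrative defaults).

    Hard term: cross-entropy of the student (T = 1) against the ground-truth label.
    Soft term: KL between the softened teacher and student outputs, multiplied by
    T^2 so its gradient magnitude stays comparable as the temperature changes.
    """
    hard = -math.log(softmax(student_logits)[label])
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    soft = kl_divergence(p_teacher, p_student) * temperature ** 2
    return (1 - alpha) * hard + alpha * soft


def non_ground_truth_distribution(logits: Sequence[float], label: int,
                                  temperature: float = 4.0) -> List[float]:
    """Hypothetical helper: softened responses on the non-ground-truth classes,
    renormalized to sum to 1, i.e. the class-similarity part of the output."""
    probs = softmax(logits, temperature)
    rest = [p for i, p in enumerate(probs) if i != label]
    total = sum(rest)
    return [p / total for p in rest]
```

For example, `kd_loss([4.0, 1.0, 1.0], [5.0, 2.0, 0.5], label=0)` mixes the student's hard-label cross-entropy with its mismatch against the teacher's softened outputs. Setting `alpha=0.0` recovers plain cross-entropy training.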

Similar Papers

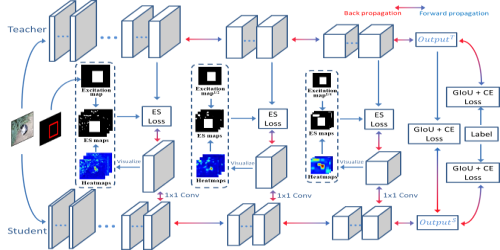

Fully Supervised and Guided Distillation for One-Stage Detectors

Deyu Wang (Canon Information Technology (Beijing) Co., LTD)*, Dongchao Wen (Canon Information Technology (Beijing) Co., LTD), Junjie Liu (Canon Information Technology (Beijing) Co., LTD), Wei Tao (Canon Information Technology (Beijing) Co., LTD), Tse-Wei Chen (Canon Inc.), Kinya Osa (Canon Inc.), Masami Kato (Canon Inc.)

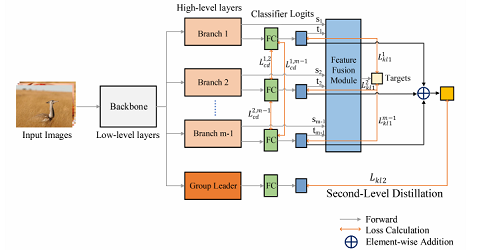

Online Knowledge Distillation via Multi-branch Diversity Enhancement

Zheng Li (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University)*, Ying Huang (Hangzhou Normal University), Defang Chen (Zhejiang University), Tianren Luo (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University), Ning Cai (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University), Zhigeng Pan (Institute of Virtual Reality and Intelligent System, Hangzhou Normal University)

Tracking-by-Trackers with a Distilled and Reinforced Model

Matteo Dunnhofer (University of Udine)*, Niki Martinel (University of Udine), Christian Micheloni (University of Udine, Italy)