ERIC: Extracting Relations Inferred from Convolutions

Joe Townsend (Fujitsu Laboratories of Europe LTD)*, Theodoros Kasioumis (Fujitsu Laboratories of Europe LTD), Hiroya Inakoshi (Fujitsu Laboratories of Europe LTD)

Keywords: Recognition: Feature Detection, Indexing, Matching, and Shape Representation

Abstract:

Our main contribution is to show that the behaviour of kernels across multiple layers of a convolutional neural network (CNN) can be approximated using a logic program. The extracted logic programs yield accuracies that correlate with those of the original model, though with some information loss, in particular as approximations of multiple layers are chained together or as lower layers are quantised. We also show that an extracted program can be used as a framework for further understanding the behaviour of CNNs. Specifically, it can be used to identify key kernels worthy of deeper inspection and to identify their relationships with other kernels, expressed as logical rules. Finally, we make a preliminary, qualitative assessment of rules we extract from the last convolutional layer and show that the kernels identified are symbolic, in that they react strongly to sets of similar images that effectively divide output classes into sub-classes with distinct characteristics.
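To make the idea of approximating kernels with logical rules more concrete, the following is a minimal illustrative sketch, not the authors' procedure. It assumes that each kernel's activation map is quantised to a truth value by comparing its mean to a threshold, and that a rule is a conjunction of (possibly negated) kernel literals. The function names `quantise` and `rule_fires`, the kernel names `k1` and `k2`, the threshold and the rule itself are all hypothetical.

```python
from typing import Dict, List, Sequence


def quantise(
    activation_maps: Dict[str, Sequence[Sequence[float]]],
    threshold: float,
) -> Dict[str, bool]:
    """Map each kernel's activation map to True/False.

    Assumption: a kernel counts as "active" when the mean of its
    activation map exceeds the threshold. The paper may quantise differently.
    """
    truth = {}
    for name, fmap in activation_maps.items():
        values = [v for row in fmap for v in row]
        truth[name] = sum(values) / len(values) > threshold
    return truth


def rule_fires(body: List[str], truth: Dict[str, bool]) -> bool:
    """Evaluate a conjunctive rule body over quantised kernels.

    A literal prefixed with "not " is satisfied when that kernel is inactive.
    """
    for literal in body:
        if literal.startswith("not "):
            if truth[literal[4:]]:
                return False
        elif not truth[literal]:
            return False
    return True


# Hypothetical activation maps for two kernels on a single image.
maps = {
    "k1": [[0.9, 0.8], [0.7, 1.0]],
    "k2": [[0.0, 0.1], [0.0, 0.1]],
}
truth = quantise(maps, threshold=0.5)

# Hypothetical rule: the class is predicted if k1 is active and k2 is not.
fires = rule_fires(["k1", "not k2"], truth)
print(truth, fires)
```

In this toy setting, chaining layers would mean that the truth values of one layer's kernels become the literals in the rule bodies of the next, which is one way to picture where the information loss mentioned in the abstract could accumulate.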

Similar Papers

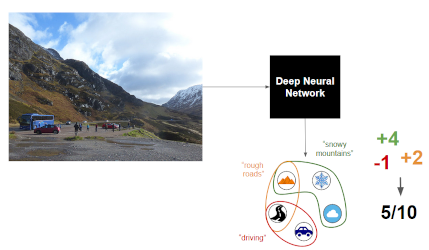

Contextual Semantic Interpretability

Diego Marcos (Wageningen University)*, Ruth Fong (University of Oxford), Sylvain Lobry (Wageningen University and Research), Rémi Flamary (Université Côte d’Azur), Nicolas Courty (UBS), Devis Tuia (Wageningen University and Research)

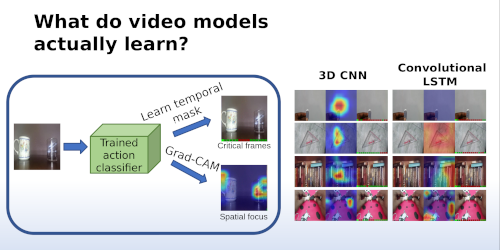

Interpreting Video Features: A Comparison of 3D Convolutional Networks and Convolutional LSTM Networks

Joonatan Mänttäri (KTH Royal Institute of Technology), Sofia Broomé (KTH Royal Institute of Technology)*, John Folkesson (KTH Royal Institute of Technology), Hedvig Kjellström (KTH Royal Institute of Technology)

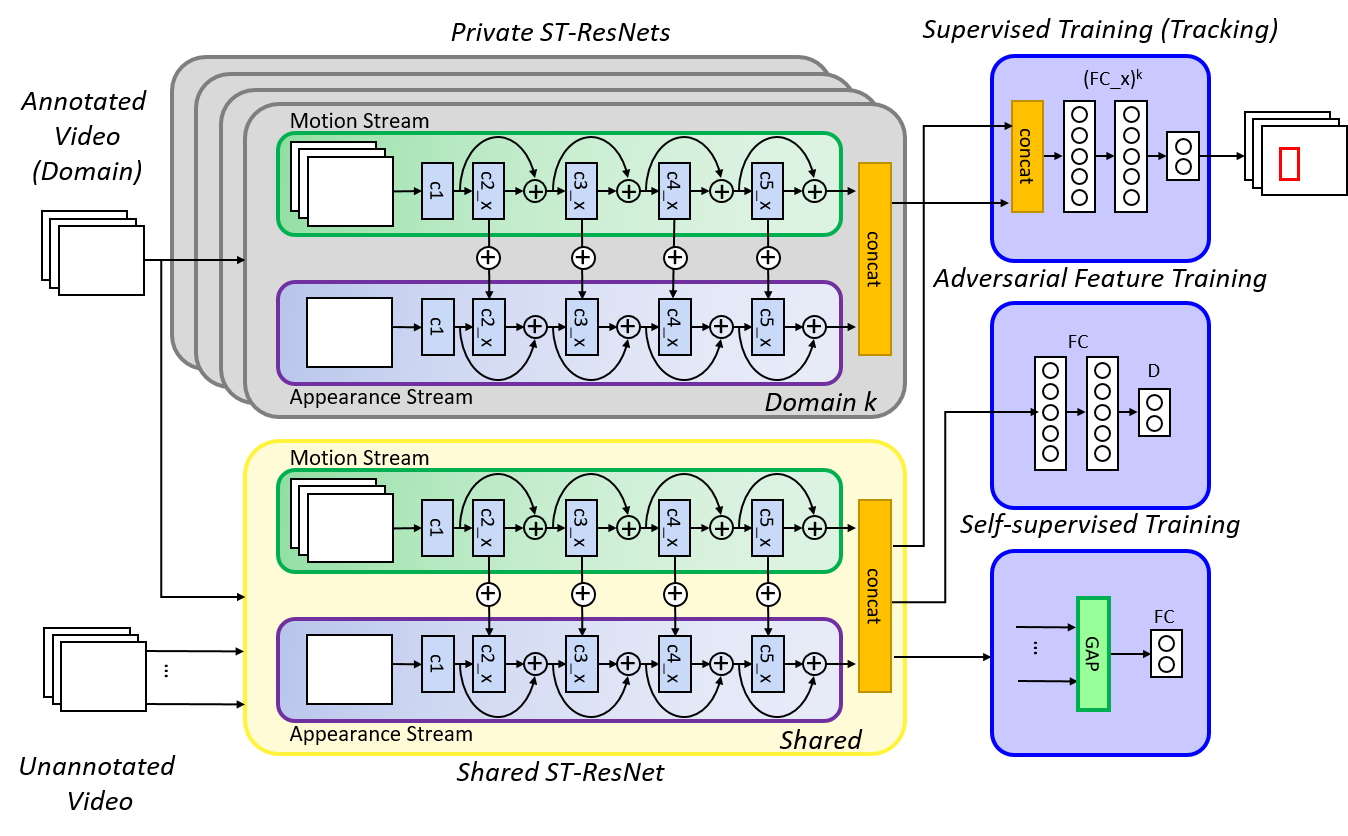

Adversarial Semi-Supervised Multi-Domain Tracking

Kourosh Meshgi (RIKEN AIP)*, Maryam Sadat Mirzaei (Riken AIP / Kyoto University)