Decoupled Spatial-Temporal Attention Network for Skeleton-Based Action-Gesture Recognition

Lei Shi (Institute of Automation, Chinese Academy of Sciences )*, Yifan Zhang (Institute of Automation, Chinese Academy of Sciences), Jian Cheng ("Chinese Academy of Sciences, China"), Hanqing Lu (NLPR, Institute of Automation, CAS)

Keywords: Face, Pose, Action, and Gesture

Abstract:

Dynamic skeletal data, represented as the 2D/3D coordinates of human joints, has been widely studied for human action recognition due to its high-level semantic information and environmental robustness. However, previous methods heavily rely on designing hand-crafted traversal rules or graph topologies to draw dependencies between the joints, which are limited in performance and generalizability. In this work, we present a novel decoupled spatial-temporal attention network(DSTA-Net) for skeleton-based action recognition. It involves solely the attention blocks, allowing for modeling spatial-temporal dependencies between joints without the requirement of knowing their positions or mutual connections. Specifically, to meet the specific requirements of the skeletal data, three techniques are proposed for building attention blocks, namely, spatial-temporal attention decoupling, decoupled position encoding and spatial global regularization. Besides, from the data aspect, we introduce a skeletal data decoupling technique to emphasize the specific characteristics of space/time and different motion scales, resulting in a more comprehensive understanding of the human actions.To test the effectiveness of the proposed method, extensive experiments are conducted on four challenging datasets for skeleton-based gesture and action recognition, namely, SHREC, DHG, NTU-60 and NTU-120, where DSTA-Net achieves state-of-the-art performance on all of them.

SlidesLive

Similar Papers

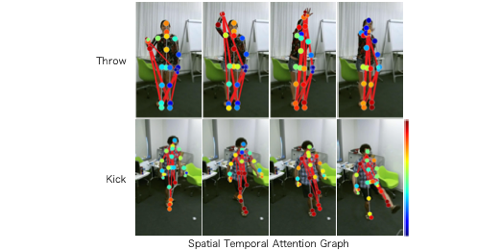

Spatial Temporal Attention Graph Convolutional Networks with Mechanics-Stream for Skeleton-based Action Recognition

Katsutoshi Shiraki (Chubu University)*, Tsubasa Hirakawa (Chubu University), Takayoshi Yamashita (Chubu University), Hironobu Fujiyoshi (Chubu University)

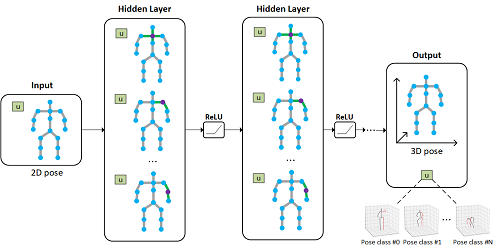

Learning Global Pose Features in Graph Convolutional Networks for 3D Human Pose Estimation

Kenkun Liu ( University of Illinois at Chicago), Zhiming Zou (University of Illinois at Chicago), Wei Tang (University of Illinois at Chicago)*

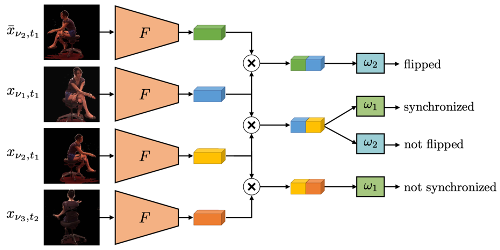

Self-Supervised Multi-View Synchronization Learning for 3D Pose Estimation

Simon Jenni (Universität Bern)*, Paolo Favaro (University of Bern)