Novel-View Human Action Synthesis

Mohamed Ilyes Lakhal (Queen Mary University of London)*, Davide Boscaini (Fondazione Bruno Kessler), Fabio Poiesi (Fondazione Bruno Kessler), Oswald Lanz (Fondazione Bruno Kessler, Italy), Andrea Cavallaro (Queen Mary University of London, UK)

Keywords: Generative models for computer vision ; Video Analysis and Event Recognition

Abstract:

Novel-View Human Action Synthesis aims to synthesize the movement of a body from a virtual viewpoint, given a video from a real viewpoint.We present a novel 3D reasoning to synthesize the target viewpoint. We first estimate the 3D mesh of the target body and transfer the rough textures from the 2D images to the mesh.As this transfer may generate sparse textures on the mesh due to frame resolution or occlusions.We produce a semi-dense textured mesh by propagating the transferred textures both locally, within local geodesic neighborhoods, and globally, across symmetric semantic parts.Next, we introduce a context-based generator to learn how to correct and complete the residual appearance information.This allows the network to independently focus on learning the foreground and background synthesis tasks.We validate the proposed solution on the public NTU RGB+D dataset. The code and resources are available at \url{https://bit.ly/36u3h4K}.

SlidesLive

Similar Papers

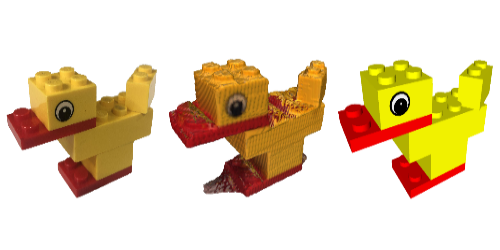

Reconstructing Creative Lego Models

George Tattersall (University of York)*, Dizhong Zhu (University of York), William A. P. Smith (University of York), Sebastian Deterding (University of York), Patrik Huber (University of York)

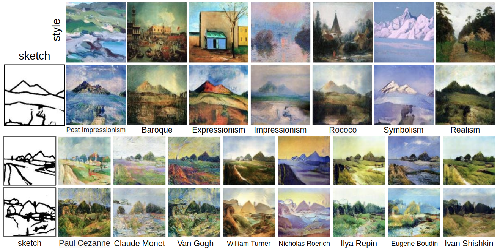

Sketch-to-Art: Synthesizing Stylized Art Images From Sketches

Bingchen Liu (Rutgers, The State University of New Jersey)*, Kunpeng Song (Rutgers University), Yizhe Zhu (Rutgers University ), Ahmed Elgammal (-)

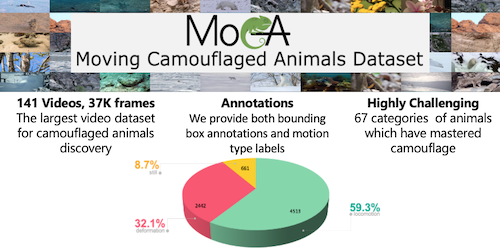

Betrayed by Motion: Camouflaged Object Discovery via Motion Segmentation

Hala Lamdouar (University of Oxford)*, Charig Yang (University of Oxford), Weidi Xie (University of Oxford), Andrew Zisserman (University of Oxford)