Progressive Batching for Efficient Non-linear Least Squares

Huu Le (Chalmers University of Technology)*, Christopher Zach (Chalmers University), Edward Rosten (Snap Inc.), Oliver J. Woodford (Snap Inc.)

Keywords: Optimization Methods

Abstract:

Non-linear least squares solvers are used across a broad range of offline and real-time model fitting problems. Most improvements to the basic Gauss-Newton algorithm either target convergence guarantees or exploit the sparsity of the underlying problem structure for computational speed-up. With the success of deep learning methods trained on large datasets, stochastic optimization methods have recently received a lot of attention. Our work borrows ideas from both stochastic machine learning and statistics: we present an approach for non-linear least squares that guarantees convergence while significantly reducing the required amount of computation. Empirical results show that the proposed method achieves competitive convergence rates compared to traditional second-order approaches on common computer vision problems, such as essential/fundamental matrix estimation with very large numbers of correspondences.
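To make the general idea concrete, here is a minimal sketch of progressive batching applied to Gauss-Newton. It is not the authors' algorithm. The toy model `y = a * exp(b * x)`, the function names, and the batch-growth rule (double the batch once a Gauss-Newton step on the current sample is negligible or fails to lower the sampled cost) are all illustrative assumptions. The paper's own criterion for growing the batch is not reproduced here. Because the batches are nested prefixes of one fixed random permutation and eventually include every residual, the final iterations are ordinary Gauss-Newton on the full problem.

```python
import math
import random


def model_terms(params, x, y):
    """Residual r = a*exp(b*x) - y and its gradient w.r.t. (a, b) (toy model, assumed)."""
    a, b = params
    e = math.exp(b * x)
    return a * e - y, (e, a * x * e)


def batch_cost(params, xs, ys, idx):
    """Sum of squared residuals over the sampled indices only."""
    return sum(model_terms(params, xs[i], ys[i])[0] ** 2 for i in idx)


def gauss_newton_step(params, xs, ys, idx):
    """Solve (J^T J) delta = -J^T r on the batch (2x2 system, Cramer's rule)."""
    h11 = h12 = h22 = g1 = g2 = 0.0
    for i in idx:
        r, (ja, jb) = model_terms(params, xs[i], ys[i])
        h11 += ja * ja
        h12 += ja * jb
        h22 += jb * jb
        g1 += ja * r
        g2 += jb * r
    det = h11 * h22 - h12 * h12
    if det == 0.0:
        raise ValueError("singular normal equations on this batch")
    return (-(h22 * g1 - h12 * g2) / det, -(h11 * g2 - h12 * g1) / det)


def progressive_gauss_newton(xs, ys, params, batch0=64, tol=1e-8,
                             max_iters=200, seed=0):
    """Hypothetical progressive-batching loop: batches are nested and only grow."""
    n = len(xs)
    order = list(range(n))
    random.Random(seed).shuffle(order)  # fixed random order -> nested batches
    m = min(batch0, n)
    sizes = []
    for _ in range(max_iters):
        idx = order[:m]
        sizes.append(m)
        da, db = gauss_newton_step(params, xs, ys, idx)
        c0 = batch_cost(params, xs, ys, idx)
        t, accepted = 1.0, False
        while t >= 1e-4:  # simple backtracking on the sampled cost
            cand = (params[0] + t * da, params[1] + t * db)
            if batch_cost(cand, xs, ys, idx) < c0:
                params, accepted = cand, True
                break
            t *= 0.5
        # Assumed growth rule: enlarge the sample once it is (nearly) solved.
        if not accepted or t * max(abs(da), abs(db)) < tol:
            if m == n:
                break  # full data set: plain Gauss-Newton has converged
            m = min(2 * m, n)
    return params, sizes


# Usage on synthetic data (true a = 2.0, b = -1.5, small Gaussian noise).
data_rng = random.Random(1)
n = 5000
xs = [i / (n - 1) for i in range(n)]
ys = [2.0 * math.exp(-1.5 * x) + data_rng.gauss(0.0, 0.01) for x in xs]
(a, b), sizes = progressive_gauss_newton(xs, ys, (1.0, 0.0))
print(a, b, sizes[-1])
```

Most early iterations touch only a few dozen residuals, so they are cheap. Only the last few iterations pay for the full data set, which is where the computational saving of this style of scheme comes from.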


Similar Papers

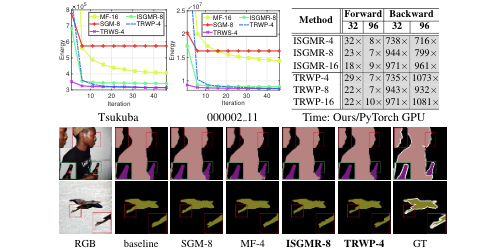

Fast and Differentiable Message Passing on Pairwise Markov Random Fields

Zhiwei Xu (Australian National University)*, Thalaiyasingam Ajanthan (ANU), Richard Hartley (Australian National University, Australia)

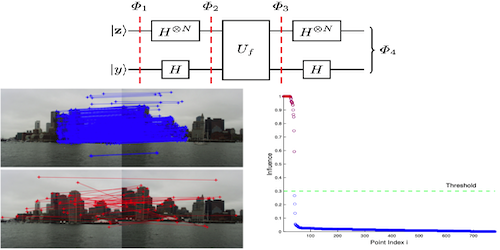

Quantum Robust Fitting

Tat-Jun Chin (University of Adelaide), David Suter (Edith Cowan University), Shin-Fang Ch'ng (The University of Adelaide)*, James Quach (The University of Adelaide)

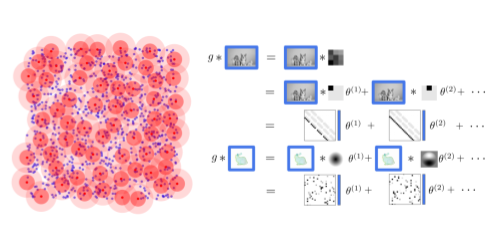

Sparse Convolutions on Continuous Domains for Point Cloud and Event Stream Networks

Dominic Jack (Queensland University of Technology)*, Frederic Maire (Queensland University of Technology), Simon Denman (Queensland University of Technology, Australia), Anders Eriksson (University of Queensland)